Robust detection of lips in face images (Status: Completed)

A prerequisite for lip shape modelling is that the mouth region in a face image is reliably detected and the lip pixels extracted. The current implementation of the lip detection, modelling and tracking system uses a very simple mouth region extraction technique based on a coarse face model. The aim of this task is to investigate more sophisticated techniques of image segmentation and object detection, such as those based on local binary masks for texture representation and AdaBoost-trained detectors.

Final Description:

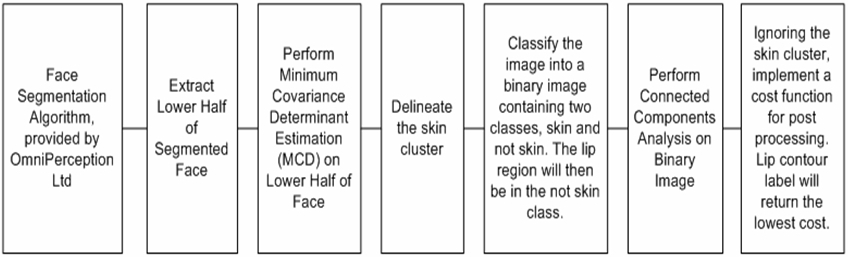

A variety of lip region segmentation techniques from the literature were studied, and a novel technique for lip segmentation has been proposed. The system is outlined in Figure 1.

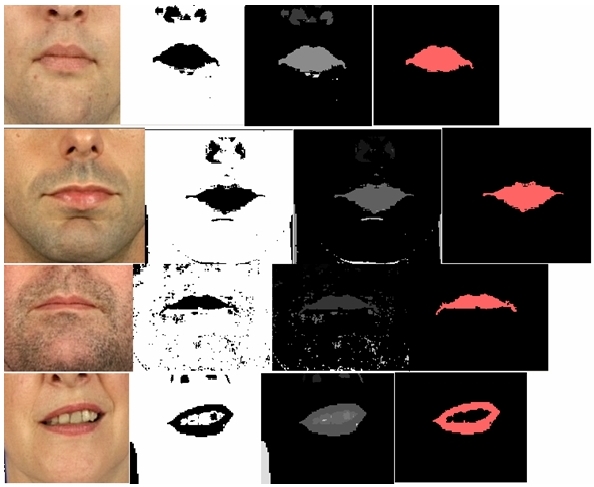

The system uses a robust statistical estimator, the Minimum Covariance Determinant (MCD) estimator, to delineate the largest chromatically correlated region in the lower half of the face. A binary image can thus be created, consisting of the dominant cluster and the remaining pixels. Performing connected components analysis on this binary image, followed by a simple cost function (based on the distance from the image centre and the area of the connected region), yields a reliable classification of the lip region. Example results are shown in Figure 2.

Figure 2 shows example images from each stage of the processing, in the following order:

- Lower half of extracted image.

- Binary Image.

- Labelled image with connected components.

- Extracted lip region.
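The stages listed above can be illustrated with a minimal sketch. It is not the project's implementation: it assumes each pixel is described by a two-channel chromaticity pair, approximates the MCD estimate with random starts refined by concentration steps, assumes the lips fall among the pixels outside the dominant cluster, and uses a made-up cost (centroid distance from the image centre minus a weighted area). The names `segment_lips`, `support_fraction`, `cutoff` and `area_weight` are hypothetical.

```python
import random
from collections import deque

def mean_cov(pts, eps=1e-9):
    """Mean and 2x2 covariance (diagonal regularised by eps) of (a, b) pairs."""
    n = len(pts)
    ma = sum(p[0] for p in pts) / n
    mb = sum(p[1] for p in pts) / n
    saa = sum((p[0] - ma) ** 2 for p in pts) / n + eps
    sbb = sum((p[1] - mb) ** 2 for p in pts) / n + eps
    sab = sum((p[0] - ma) * (p[1] - mb) for p in pts) / n
    return (ma, mb), (saa, sab, sbb)

def maha_sq(p, mean, cov):
    """Squared Mahalanobis distance, using the closed-form 2x2 inverse."""
    saa, sab, sbb = cov
    da, db = p[0] - mean[0], p[1] - mean[1]
    det = saa * sbb - sab * sab
    return (sbb * da * da - 2 * sab * da * db + saa * db * db) / det

def mcd(pts, h, n_starts=10, n_steps=10, seed=0):
    """Approximate MCD: keep the h-subset with the smallest covariance determinant."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_starts):
        subset = rng.sample(pts, 3)              # small random starting subset
        for _ in range(n_steps):                 # concentration steps
            mean, cov = mean_cov(subset)
            subset = sorted(pts, key=lambda p: maha_sq(p, mean, cov))[:h]
        mean, cov = mean_cov(subset)
        det = cov[0] * cov[2] - cov[1] ** 2
        if best is None or det < best[0]:
            best = (det, mean, cov)
    return best[1], best[2]

def components(mask):
    """4-connected components of a binary mask, each a list of (row, col)."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    found = []
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] and not seen[r][c]:
                seen[r][c] = True
                queue, comp = deque([(r, c)]), []
                while queue:
                    y, x = queue.popleft()
                    comp.append((y, x))
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                        inside = 0 <= ny < rows and 0 <= nx < cols
                        if inside and mask[ny][nx] and not seen[ny][nx]:
                            seen[ny][nx] = True
                            queue.append((ny, nx))
                found.append(comp)
    return found

def pick_region(mask, area_weight=1.0):
    """Return the component with the lowest (hypothetical) cost."""
    cy, cx = (len(mask) - 1) / 2, (len(mask[0]) - 1) / 2
    def cost(comp):
        my = sum(y for y, _ in comp) / len(comp)
        mx = sum(x for _, x in comp) / len(comp)
        return ((my - cy) ** 2 + (mx - cx) ** 2) ** 0.5 - area_weight * len(comp)
    comps = components(mask)
    return min(comps, key=cost) if comps else []

def segment_lips(chroma, support_fraction=0.75, cutoff=7.378):
    """chroma: 2-D list of (a, b) pairs for the lower half of the face."""
    pts = [p for row in chroma for p in row]
    mean, cov = mcd(pts, h=int(support_fraction * len(pts)))
    # Binary image: True where a pixel lies outside the dominant cluster.
    mask = [[maha_sq(p, mean, cov) > cutoff for p in row] for row in chroma]
    return pick_region(mask)
```

The default `cutoff` is roughly the 97.5% quantile of a chi-square distribution with two degrees of freedom, a conventional outlier threshold for two-dimensional data. A real system would need to choose the colour space, the support fraction and the cost weights empirically.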

The remaining tasks are briefly described in the following sections.

Lip Shape Modelling

The system developed previously within the CVSSP uses splines to create lip shape models. Currently, the location of the nodes of the model during fitting is purely data driven. This may result in unrealistic models of lip shape being generated. The aim of this task will be to develop a statistical model of spline node interactions and use this model to enhance the process of spline fitting.
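One common way to build such a statistical model is a point distribution model: the node coordinates of training shapes are flattened into vectors, their mean and principal modes of variation are estimated, and a fitted shape is pulled back into the range seen in training. The sketch below is an illustration of that idea only, not the method adopted in the project. It keeps a single mode (found by power iteration) for brevity, and the names `fit_shape_model`, `constrain` and `limit` are hypothetical.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def fit_shape_model(shapes, n_iter=100):
    """Mean shape, leading mode of variation and its variance.

    shapes: list of flattened node vectors [x1, y1, x2, y2, ...].
    """
    n, d = len(shapes), len(shapes[0])
    mean = [sum(s[i] for s in shapes) / n for i in range(d)]
    centred = [[s[i] - mean[i] for i in range(d)] for s in shapes]
    mode = [float(i + 1) for i in range(d)]      # arbitrary start vector
    for _ in range(n_iter):
        # Covariance times mode, without forming the covariance matrix.
        w = [sum(dot(x, mode) * x[i] for x in centred) / n for i in range(d)]
        norm = dot(w, w) ** 0.5
        if norm == 0.0:                          # no variation along this vector
            break
        mode = [c / norm for c in w]
    variance = sum(dot(x, mode) ** 2 for x in centred) / n
    return mean, mode, variance

def constrain(shape, mean, mode, variance, limit=3.0):
    """Project a shape onto the mode and clamp it to +/- limit std devs."""
    diff = [s - m for s, m in zip(shape, mean)]
    bound = limit * variance ** 0.5
    b = max(-bound, min(bound, dot(diff, mode)))
    return [m + b * v for m, v in zip(mean, mode)]
```

In a fitting loop, `constrain` would be applied after each data-driven update of the nodes, so that node configurations never seen in training are replaced by the nearest plausible shape under the model.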

Lip Shape Tracking

The current system fits a lip shape model on a frame-by-frame basis. Clearly, the motion of the lips can be predicted to a certain extent from knowledge of their past evolution. For instance, when the mouth starts opening, it is likely to continue opening. When it reaches a fully open state, it will be expected to start closing. A dynamic model of lip shape expressed in terms of a Kalman filter, particle filter or hidden Markov model should be able to capture the lip dynamics. The aim of this task is to explore the use of some of these models to exploit the temporal context in lip dynamics to enhance the quality and speed of the modelling process.
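As an illustration of the Kalman filter option, the sketch below tracks a single scalar, the mouth opening, with a constant-velocity model and returns the one-step-ahead prediction for each frame. It is a simplified assumption-laden example: a real lip tracker would carry the full shape parameters in the state, and the function name `predict_opening` and the noise settings `q` and `r` are hypothetical.

```python
def predict_opening(measurements, dt=1.0, q=1e-2, r=1.0):
    """One-step-ahead predictions of mouth opening from past frames.

    State is (opening, rate of change); process noise is assumed to be
    diag(q, q) and measurement noise variance is r.
    """
    x, v = measurements[0], 0.0
    p00, p01, p11 = r, 0.0, 1.0          # symmetric 2x2 state covariance
    predictions = []
    for z in measurements[1:]:
        # Predict: x <- x + dt * v, P <- F P F^T + Q with F = [[1, dt], [0, 1]].
        x = x + dt * v
        p00 = p00 + dt * (2 * p01 + dt * p11) + q
        p01 = p01 + dt * p11
        p11 = p11 + q
        predictions.append(x)
        # Update with the measured opening z (observation matrix H = [1, 0]).
        s = p00 + r
        k0, k1 = p00 / s, p01 / s
        resid = z - x
        x += k0 * resid
        v += k1 * resid
        p00, p01, p11 = (1 - k0) * p00, (1 - k0) * p01, p11 - k1 * p01
    return predictions
```

Each prediction could seed the model fit for the next frame, which is where a gain in speed would be expected. A constant-velocity model captures "opening tends to continue" but not the reversal at the fully open state; that behaviour would need a richer dynamic model.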

Comparative Evaluation

The approaches proposed in the above tasks can be compared with other approaches, such as the face appearance model, which fits and tracks the complete face simultaneously, as well as approaches which model lip dynamics in terms of mouth region pixel motion patterns. As far as possible, the comparative evaluation will be carried out using publicly available software, or in collaboration with research groups elsewhere.

Evaluation in Biometrics

The lip shape modelling and tracking system will be evaluated in the context of personal identity verification. Two multimodal video databases, namely XM2VTS and BANCA, are available for use. The biometric models of subjects based on lip dynamic properties will be evaluated using the standard evaluation protocols defined for these databases.
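Verification experiments of this kind are usually summarised by the false acceptance rate (FAR), the false rejection rate (FRR) and their average, the half total error rate (HTER). The sketch below shows how these could be computed from match scores, with the decision threshold fixed on a separate development set before being applied to test data. The function names and the convention that higher scores mean a better match are assumptions of this example, not details of the database protocols.

```python
def error_rates(genuine, impostor, threshold):
    """Return (FAR, FRR, HTER) for claims accepted when score >= threshold."""
    far = sum(s >= threshold for s in impostor) / len(impostor)
    frr = sum(s < threshold for s in genuine) / len(genuine)
    return far, frr, (far + frr) / 2

def pick_threshold(genuine, impostor):
    """Threshold, taken from the observed scores, where FAR and FRR are closest."""
    def gap(t):
        far, frr, _ = error_rates(genuine, impostor, t)
        return abs(far - frr)
    return min(sorted(set(genuine) | set(impostor)), key=gap)
```

A typical use would call `pick_threshold` on development-set scores and then report `error_rates` on the test-set scores at that fixed threshold.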

Evaluation in Man-Machine Interface application

The lip modelling and tracking system will be evaluated either in facial expression recognition or in speech recognition. If we opt for the latter application, a commercial speech recognition system will be used for the study, with acoustic information replaced or augmented by lip shape dynamics.