Dynamic Surface Animation using Generative Networks

João Regateiro, Adrian Hilton and Marco Volino

Centre for Vision, Speech & Signal Processing, University of Surrey, United Kingdom

International Conference on 3D Vision (3DV) 2019

Abstract

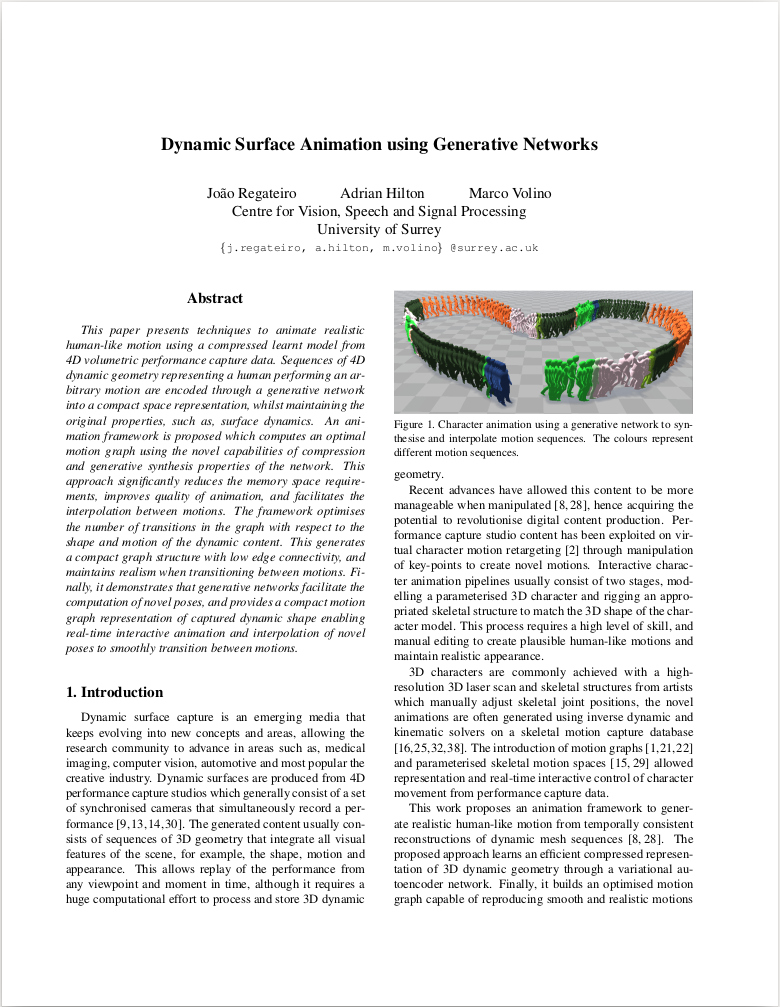

This paper presents techniques to animate realistic human-like motion using a compressed model learnt from 4D volumetric performance capture data. Sequences of 4D dynamic geometry, each representing a human performing an arbitrary motion, are encoded by a generative network into a compact latent representation that preserves properties of the original data such as surface dynamics. An animation framework is proposed which computes an optimal motion graph by exploiting the compression and generative synthesis capabilities of the network. This approach significantly reduces memory requirements, improves animation quality, and facilitates interpolation between motions. The framework optimises the number of transitions in the graph with respect to the shape and motion of the dynamic content, producing a compact graph structure with low edge connectivity that maintains realism when transitioning between motions. Finally, the paper demonstrates that generative networks facilitate the computation of novel poses, and that the resulting compact motion graph representation of captured dynamic shape enables real-time interactive animation, with interpolated novel poses providing smooth transitions between motions.
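As an illustration only, the sketch below shows one way a motion graph over latent codes could be built and used for transitions. Each frame is represented by a hypothetical latent vector standing in for the output of the generative network's encoder. Within a motion, consecutive frames are linked. Between each pair of motions at most one transition is added, the cheapest one below a cost threshold, which keeps edge connectivity low. Intermediate poses for a transition are obtained by linearly interpolating latent codes. The Euclidean cost, the one-transition-per-pair rule, the `max_cost` threshold, linear interpolation and the function names `build_motion_graph` and `interpolate` are all assumptions of this sketch, not the optimisation described in the paper. In a full system each interpolated code would be passed to the network's decoder to obtain a mesh.

```python
import math
from itertools import combinations
from typing import Dict, List, Tuple

Node = Tuple[str, int]  # (motion name, frame index)


def distance(a: List[float], b: List[float]) -> float:
    """Euclidean distance between two latent codes (assumed transition cost)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def build_motion_graph(
    motions: Dict[str, List[List[float]]], max_cost: float
) -> Dict[Node, List[Node]]:
    """Build a sparse motion graph over per-frame latent codes (hypothetical).

    Consecutive frames of the same motion are linked. For each pair of
    motions, only the single cheapest frame pair with cost <= max_cost
    becomes a transition, added in both directions.
    """
    graph: Dict[Node, List[Node]] = {}
    for name, frames in motions.items():
        for i in range(len(frames)):
            graph[(name, i)] = []
        for i in range(len(frames) - 1):
            graph[(name, i)].append((name, i + 1))

    for a, b in combinations(sorted(motions), 2):
        best = None  # (cost, frame in a, frame in b)
        for i, za in enumerate(motions[a]):
            for j, zb in enumerate(motions[b]):
                cost = distance(za, zb)
                if cost <= max_cost and (best is None or cost < best[0]):
                    best = (cost, i, j)
        if best is not None:
            _, i, j = best
            graph[(a, i)].append((b, j))
            graph[(b, j)].append((a, i))
    return graph


def interpolate(z0: List[float], z1: List[float], steps: int) -> List[List[float]]:
    """Return `steps` latent codes strictly between z0 and z1 (linear blend).

    In a real system each code would be decoded into a mesh to render
    an intermediate pose during the transition.
    """
    return [
        [x + (y - x) * t / (steps + 1) for x, y in zip(z0, z1)]
        for t in range(1, steps + 1)
    ]


if __name__ == "__main__":
    # Toy 2D "latent codes" for two short motions.
    motions = {
        "walk": [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]],
        "run": [[2.0, 1.0], [3.0, 1.0], [4.0, 1.0]],
    }
    graph = build_motion_graph(motions, max_cost=1.5)
    print(graph[("walk", 2)])  # [('run', 0)]
    print(interpolate(motions["walk"][2], motions["run"][0], 3))
```

With these toy codes the only transition kept is between the last `walk` frame and the first `run` frame (cost 1.0), and the three interpolated codes are `[2.0, 0.25]`, `[2.0, 0.5]` and `[2.0, 0.75]`. Keeping one transition per motion pair is simply the easiest way to illustrate low edge connectivity; a cost that also weighs shape and motion similarity, as the abstract describes, would replace `distance`.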

Citation

@inproceedings{Regateiro:3DV:2019,
  AUTHOR = "Regateiro, Jo{\~a}o and Hilton, Adrian and Volino, Marco",
  TITLE = "Dynamic Surface Animation using Generative Networks",
  BOOKTITLE = "International Conference on 3D Vision (3DV)",
  YEAR = "2019",
}